Since late February 2026, MCP (the Model Context Protocol) has been under fire.

“MCP is dead, long live the CLI”, reads the title of Eric Holmes’s now-viral article.

The CTO of Perplexity announced the internal abandonment of the protocol.

Garry Tan, president of Y Combinator, tweeted bluntly: “MCP sucks honestly.”

The OpenClaw project deliberately chose not to support it.

The grievances are well-known:

- explosive token consumption,

- immature authentication,

- questionable operational reliability.

And they are legitimate — within a very specific architectural model. One that we have never adopted at Agora.

The real problem: the LLM in charge of MCP

The pattern everyone is attacking is that of the LLM-as-router. The schemas of dozens of MCP tools — their descriptions, parameters, and constraints — are injected into the model’s context. The LLM must then decide which tool to call, with which arguments, and in which order. This is the dominant pattern in today’s AI agent ecosystem.

And this is precisely where the criticism hits its mark.

Cloudflare measured the gap on its own API: 1.17 million tokens to expose its 2,500 endpoints via native MCP schemas — more than the full context window of the most advanced models. Even keeping only the required parameters, the count remains at 244,000 tokens.

Their solution, Code Mode, brings this down to ~1,000 tokens through two generic tools (search() and execute()), representing a 99.9% reduction.
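As a quick sanity check on these orders of magnitude, the arithmetic can be reproduced in a few lines. The three token counts are the ones quoted above; the constant and function names are ours, chosen for illustration.

```python
# Token counts quoted in the article (Cloudflare's measurements).
NATIVE_SCHEMA_TOKENS = 1_170_000   # all 2,500 endpoints as native MCP schemas
REQUIRED_ONLY_TOKENS = 244_000     # same endpoints, required parameters only
CODE_MODE_TOKENS = 1_000           # approximate figure ("~1,000")


def reduction(before: int, after: int) -> float:
    """Return the percentage reduction when going from `before` to `after`."""
    return (1 - after / before) * 100


print(f"{reduction(NATIVE_SCHEMA_TOKENS, CODE_MODE_TOKENS):.1f}%")  # 99.9%
print(f"{reduction(REQUIRED_ONLY_TOKENS, CODE_MODE_TOKENS):.1f}%")  # 99.6%
```

Even measured against the trimmed 244,000-token variant, the reduction stays above 99%.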

When every token carries a cost in latency, energy, and money, the native MCP equation no longer holds.

Add to this the risk of error: an LLM choosing from 50 tools may call the wrong one, fabricate parameters, or trigger a destructive action based on a misunderstanding.

The diagnosis is correct. But the conclusion — “MCP must be abandoned” — confuses the protocol with an architectural pattern.

Our approach: separating understanding from execution

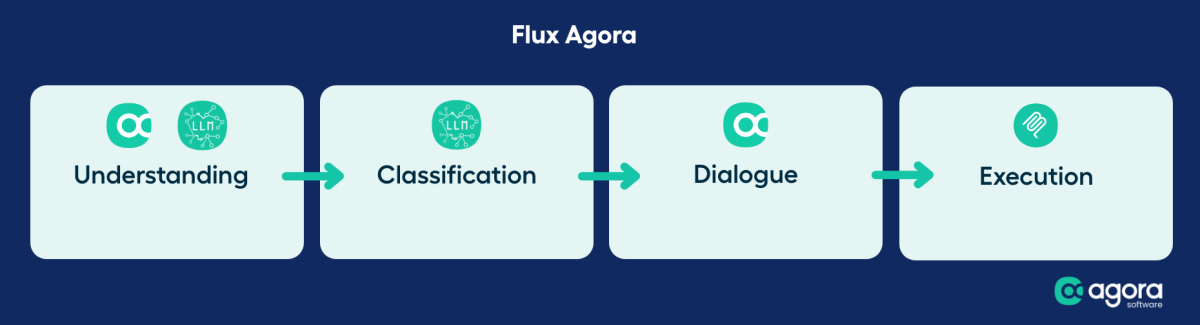

A clear separation between understanding and execution (MCP)

At Agora, we made a fundamentally different architectural choice from the very outset when designing our agentic platform.

The LLM never sees the MCP schemas. It does not choose which tool to call. It does not construct the parameters of an API call.

The flow works as follows:

The LLM is used only for what it does best: understanding natural language.

- It classifies the user’s intent (“I want to submit a leave request”, “show me my January payslip”),

- It extracts mentioned entities (dates, names, amounts), and detects conversation follow-ups.
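To make this step concrete, here is a minimal sketch of what the output of the understanding stage could look like once parsed on the code side. It is an illustration under stated assumptions, not our production format: the intent names, the JSON shape, and the `parse_llm_reply` helper are all hypothetical.

```python
import json
from dataclasses import dataclass, field

# Hypothetical intent names, for illustration only.
KNOWN_INTENTS = {"submit_leave_request", "show_payslip"}


@dataclass
class Understanding:
    """What the LLM returns: an intent, entities, and a follow-up flag."""
    intent: str
    entities: dict = field(default_factory=dict)
    is_follow_up: bool = False


def parse_llm_reply(raw: str) -> Understanding:
    """Parse the model's JSON reply and reject any intent we do not know."""
    data = json.loads(raw)
    intent = data.get("intent")
    if intent not in KNOWN_INTENTS:
        raise ValueError(f"unknown intent: {intent!r}")
    return Understanding(
        intent=intent,
        entities=dict(data.get("entities", {})),
        is_follow_up=bool(data.get("is_follow_up", False)),
    )


# Assumed reply for "show me my January payslip".
reply = '{"intent": "show_payslip", "entities": {"month": "January"}}'
result = parse_llm_reply(reply)
print(result.intent, result.entities)
```

Note that nothing in this structure names a tool or an endpoint: the model's answer is confined to a closed set of intents, and anything outside that set is rejected in code.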

MCP execution driven by a DSL and a dedicated SDK

Our SDK, driven by a declarative DSL, then takes over.

A DSL — Domain-Specific Language — is a specialized language for a specific domain. Unlike a general-purpose language such as Python, a DSL expresses business rules concisely and readably.

In our case, this DSL is injected into the LLM’s context as a structured prompt: it describes the intents recognized by the agent, the expected parameters for each intent, and the dialogue rules to follow when collecting them.
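The sketch below shows one way such a declarative description could be expressed and rendered into a prompt. It is not our actual DSL syntax: the `Param` and `Intent` classes, the `render_prompt` function, the intent names, and the "Which month?" question are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Param:
    name: str
    question: str          # asked by the dialogue when the value is missing
    required: bool = True


@dataclass(frozen=True)
class Intent:
    name: str
    description: str
    params: tuple


# Hypothetical declarations; a real DSL would live in its own files.
INTENTS = (
    Intent(
        "submit_leave_request",
        "The user wants to request leave.",
        (
            Param("start_date", "When would you like to request leave?"),
            Param("leave_type", "Is this paid leave or a day off in lieu?"),
        ),
    ),
    Intent(
        "show_payslip",
        "The user wants to see a payslip.",
        (Param("month", "Which month?"),),
    ),
)


def render_prompt(intents) -> str:
    """Render the declarations as a compact classification prompt."""
    lines = ["Classify the user's message into one of these intents:"]
    for intent in intents:
        lines.append(f"- {intent.name}: {intent.description}")
        for p in intent.params:
            flag = "required" if p.required else "optional"
            lines.append(f"    * {p.name} ({flag})")
    return "\n".join(lines)


print(render_prompt(INTENTS))
```

The rendered prompt lists business intents and their parameters only. No endpoint, transport detail, or MCP tool schema appears in it.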

What is the key difference from the LLM-as-router pattern?

Instead of injecting hundreds of MCP tool schemas into the context and asking the LLM to choose the right endpoint with the right parameters, we provide it with a targeted business grammar. The LLM only needs to understand what the user wants to do — not how the API works.

This prompt specialization makes classification considerably more stable and greatly reduces the cognitive load placed on the model.

The result: fewer tokens in context, less ambiguity, fewer errors.

Once the intent is classified and the entities extracted, it is the SDK — on the code side — that knows which intent maps to which MCP call. And it orchestrates a collection dialogue when information is missing:

- “When would you like to request leave?”

- “Is this paid leave or a day off in lieu?”

The MCP call is only triggered once all arguments have been collected and validated. Not before.
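A minimal sketch of this gate, assuming a simple routing table: the code either returns the next question to ask or, once every required argument is present, builds the MCP request. The `tools/call` method with `name` and `arguments` parameters comes from the MCP specification; the routing table, the tool name `hr.create_leave_request`, and the `next_step` function are hypothetical.

```python
from itertools import count

# Hypothetical routing table: intent -> MCP tool and required arguments.
ROUTES = {
    "submit_leave_request": {
        "tool": "hr.create_leave_request",  # illustrative tool name
        "required": {
            "start_date": "When would you like to request leave?",
            "leave_type": "Is this paid leave or a day off in lieu?",
        },
    },
}

_request_ids = count(1)


def next_step(intent: str, collected: dict) -> dict:
    """Return a question while arguments are missing, else the MCP request."""
    route = ROUTES[intent]
    for arg, question in route["required"].items():
        if not collected.get(arg):
            return {"action": "ask", "argument": arg, "question": question}
    arguments = {arg: collected[arg] for arg in route["required"]}
    return {
        "action": "call",
        "request": {
            "jsonrpc": "2.0",
            "id": next(_request_ids),
            "method": "tools/call",
            "params": {"name": route["tool"], "arguments": arguments},
        },
    }


# Only the start date is known: the SDK asks for the leave type.
print(next_step("submit_leave_request", {"start_date": "2026-03-15"}))
# Everything is known: the SDK builds the call.
print(next_step("submit_leave_request",
                {"start_date": "2026-03-15", "leave_type": "paid"}))
```

The mapping from intent to tool is a plain dictionary lookup. The model has no way to pick a different tool or to invent an argument that the table does not declare.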

What this changes in practice

Tokens used to understand, not to route

In the LLM-as-router model, the bulk of the token budget is consumed by tool descriptions injected into the context — before the user has even asked their question.

In our system, the LLM’s context contains the conversation history and the classification prompt. The MCP schemas are not included.

Result: significantly reduced token consumption per request, which directly translates into greater processing capacity on our local inference infrastructure.

Guaranteed argument collection

The classic pattern relies on the hope that the LLM will correctly extract all parameters from the user’s message in a single pass. When a required parameter is absent, the behavior is unpredictable: a fabricated parameter, a partial call, or a silent failure.

Our automated dialogue system detects missing arguments and starts a structured conversation to collect them.

Disambiguation (“you have two managers — which one?”) and confirmation (“I am going to submit leave from March 15 to 22, is that correct?”) are steps in the workflow, not emergent behaviors of the model.
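One way to picture "steps in the workflow" is an explicit state function evaluated in code at every turn. The sketch below is an assumption about how such a workflow could be modeled, not a description of our SDK; the `Step` enum, the `current_step` function, and the manager names are invented for the example.

```python
from enum import Enum, auto


class Step(Enum):
    COLLECT = auto()        # a required argument is still missing
    DISAMBIGUATE = auto()   # an argument matches several candidates
    CONFIRM = auto()        # everything is known, awaiting user confirmation
    EXECUTE = auto()        # safe to trigger the MCP call


def current_step(missing: list, candidates: dict, confirmed: bool) -> Step:
    """Decide the next workflow step from explicit state, in fixed order."""
    if missing:
        return Step.COLLECT
    if any(len(options) > 1 for options in candidates.values()):
        return Step.DISAMBIGUATE
    if not confirmed:
        return Step.CONFIRM
    return Step.EXECUTE


# Two managers match: the workflow must ask which one (names are made up).
print(current_step([], {"manager": ["A. Martin", "B. Durand"]}, False))
# One manager, not yet confirmed: ask for confirmation.
print(current_step([], {"manager": ["A. Martin"]}, False))
# Confirmed: the call may go out.
print(current_step([], {"manager": ["A. Martin"]}, True))
```

Because the order of the checks is fixed in code, execution cannot be reached while an argument is missing, ambiguous, or unconfirmed.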

Authentication: a non-issue

Among the recurring criticisms leveled at MCP, authentication comes up consistently.

Eric Holmes sums up the prevailing sentiment: “why should a protocol for giving tools to an LLM concern itself with authentication?”

CLIs rely on proven mechanisms — aws sso login, gh auth login, kubeconfig — and they work.

But this criticism conflates two distinct things:

- the immaturity of auth implementation in the community MCP ecosystem, and

- the protocol’s intrinsic ability to integrate with robust authentication solutions.

In fact, MCP, as a JSON-RPC-based protocol, does not need to reinvent authentication. It can — and should — rely on the proven standards that already exist: SAML for enterprise SSO, OAuth 2.1 for access delegation, OpenID Connect for federated authentication.

The protocol’s 2026 roadmap is moving in this direction, with the integration of OAuth 2.1 and support for streamable HTTP transport.

At Agora, the authentication of MCP calls is handled upstream by the platform. The SDK controls the full chain: user authentication, rights verification, and transmission of a validated identity context to the MCP server.

The conversational agent therefore never handles credentials directly. And this is not a limitation — it is a separation of responsibilities. The same principle that ensures a controller in a web application does not manage JWT tokens directly: it delegates to an authentication middleware.
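The middleware analogy can be sketched as follows. This is an illustration under assumptions, not our implementation: `IdentityContext`, `Forbidden`, `dispatch`, the permission strings, and the `fake_send` transport stub are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class IdentityContext:
    """Validated identity produced upstream by the platform (assumed shape)."""
    user_id: str
    permissions: frozenset


class Forbidden(Exception):
    """Raised when the user lacks the right required for a call."""


def dispatch(identity: IdentityContext, required_permission: str, tool: str,
             arguments: dict,
             send: Callable[[str, dict, IdentityContext], Any]) -> Any:
    """Check rights in code, then forward the call with the identity attached."""
    if required_permission not in identity.permissions:
        raise Forbidden(f"{identity.user_id} may not call {tool}")
    # The identity travels with the call; it never enters the LLM's context.
    return send(tool, arguments, identity)


def fake_send(tool: str, arguments: dict, identity: IdentityContext) -> dict:
    """Stand-in for the real MCP transport, used only for this example."""
    return {"tool": tool, "arguments": arguments,
            "on_behalf_of": identity.user_id}


alice = IdentityContext("alice", frozenset({"leave:write"}))
print(dispatch(alice, "leave:write", "hr.create_leave_request",
               {"start_date": "2026-03-15"}, fake_send))
```

The rights check and the identity hand-off both happen in deterministic code, so the conversational layer has nothing to leak.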

In practice, organizations struggling with MCP auth are typically those attempting to have the LLM itself manage authentication, or those deploying community MCP servers without an upstream security layer. In a well-controlled architecture, authentication is a problem that has long been solved.

MCP as an interoperability standard, not an agent engine

The current debate pits two caricatured visions against each other: “MCP everywhere” versus “MCP nowhere.” As is often the case, the reality is more nuanced.

MCP remains an excellent interoperability standard between an AI platform and the business applications it needs to operate. It provides a structured, versionable, documentable interface contract.

For a publisher of HRIS, ERP, or CRM software looking to make their application accessible via a conversational agent, MCP provides a clear framework — far more so than “expose a CLI and let the LLM figure it out.”

What is problematic is not MCP as a protocol. It is the architectural pattern of exposing the entire MCP surface to the LLM and entrusting it with routing and parameterization decisions.

- Separate natural language understanding from action execution.

- Give the LLM the role of understanding the user.

- Assign deterministic code the role of driving the calls.

And you get an MCP that does exactly what it was designed to do: a reliable integration standard between systems.

MCP is not dead

MCP is not dead. But the naive architecture that leaves the LLM alone in command — choosing the tool, guessing the parameters, triggering execution — deserves the criticism it receives.

At Agora, we build conversational agents for business software publishers who handle sensitive data on sovereign infrastructure.

Entrusting MCP routing to a probabilistic model was never an option. Our stack relies on an LLM for understanding, a DSL for routing, dialogues for collection, and MCP for interoperability.

The result: fewer tokens consumed, reliable actions, protected data, controlled authentication, and a protocol that does what it is asked to do — nothing more, nothing less.

_____________

Are you a software publisher? We hope this article has shed light on our conviction: MCP is not to be discarded — this is a matter of usage, not of design or performance.

Agora Software is a publisher of AI solutions dedicated to software publishers. We rapidly deploy agents that enhance the user experience of applications and platforms.

Would you like to integrate agents into your applications? Let’s talk: contact@agora.software

Enjoyed this read? You might also enjoy our article dedicated to local inference.

Join us on our LinkedIn page to follow our latest news!

Want to understand how our conversational AI platform optimizes your users’ productivity and engagement by effectively complementing your business applications?